AWS Athena vs Redshift

5/29/2023

Parquet format is up to twice as fast to unload and consumes up to six times less storage in Amazon S3, compared with text formats. Parquet is an efficient open columnar storage format for analytics. Unloading data from Amazon Redshift to Amazon S3Īmazon Redshift allows you to unload your data using a data lake export to an Apache Parquet file format. The Orders table has the following columns: Column To demonstrate the process performed by the company, we use the industry-standard TPC-H dataset provided publicly by the TPC organization.

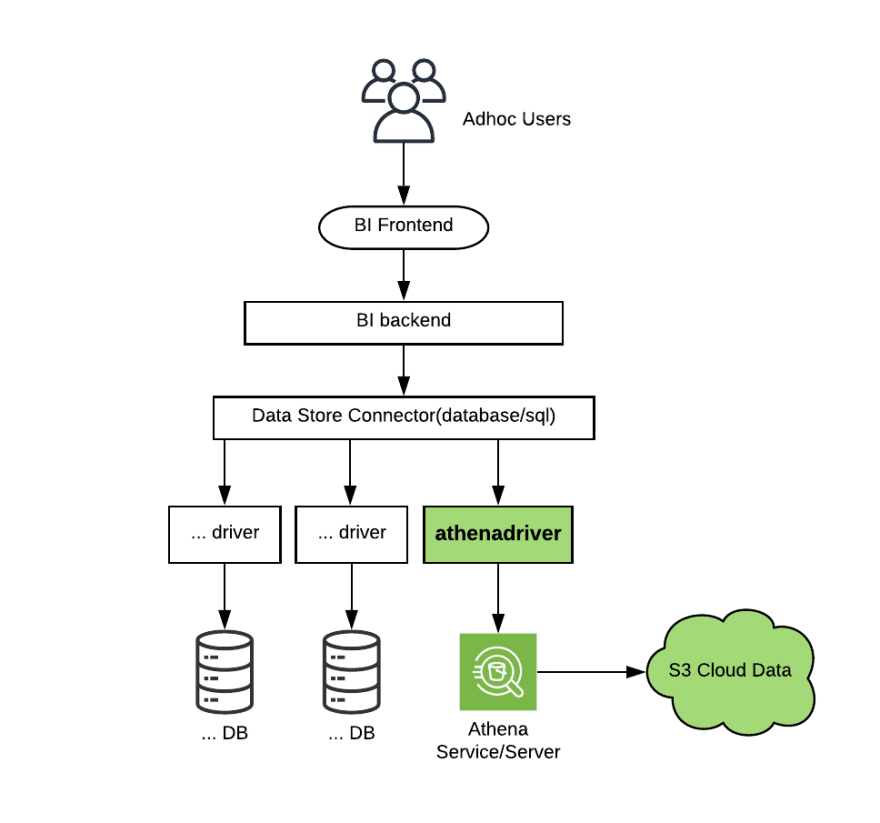

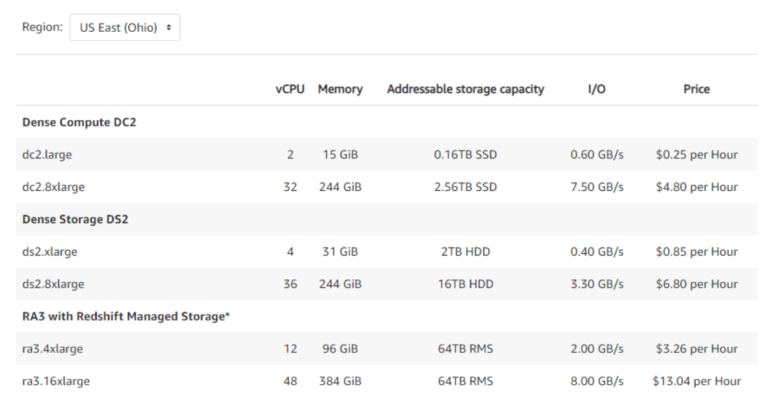

For more information, see IAM policies for Amazon Redshift Spectrum and Setting up IAM Permissions for AWS Glue. The appropriate AWS Identity and Access Management (IAM) permissions for Amazon Redshift Spectrum and AWS Glue to access Amazon S3 buckets.The following AWS services and access: Amazon Redshift, Amazon S3, AWS Glue, and Athena.To complete this walkthrough, you must have the following prerequisites: Query Amazon Redshift and the data lake with Amazon Redshift Spectrum.Create an AWS Glue Data Catalog using an AWS Glue crawler.Unload data from Amazon Redshift to Amazon S3.The solution includes the following steps: The following diagram illustrates the solution architecture. Using AWS Glue to crawl and catalog the data.Instituting a hot/cold pattern using Amazon Redshift Spectrum.Unloading data into Amazon Simple Storage Service (Amazon S3).In this post we demonstrate how the company, with the support of AWS, implemented a lake house architecture by employing the following best practices: The proposed solution implemented a hot/cold storage pattern using Amazon Redshift Spectrum and reduced the local disk utilization on the Amazon Redshift cluster to make sure costs are maintained. The cleanup operations, however, created a larger operational footprint. The high storage utilization necessitated ongoing cleanup of growing tables to avoid purchasing additional nodes and associated increased costs. They wanted a way to extend the collected data into the data lake and allow additional analytical teams to access more data to explore new ideas and business cases.Īdditionally, the company was looking to reduce their storage utilization, which had already reached more than 80% of their Amazon Redshift cluster’s storage capacity.

You can also use a data lake with ML services such as Amazon SageMaker to gain insights.Ī large startup company in Europe uses an Amazon Redshift cluster to allow different company teams to analyze vast amounts of data. You can also query structured data (such as CSV, Avro, and Parquet) and semi-structured data (such as JSON and XML) by using Amazon Athena and Amazon Redshift Spectrum. With a data lake built on Amazon Simple Storage Service (Amazon S3), you can easily run big data analytics using services such as Amazon EMR and AWS Glue. The best solution for all those requirements is for companies to build a data lake, which is a centralized repository that allows you to store all your structured, semi-structured, and unstructured data at any scale. Companies are looking to access all their data, all the time, by all users and get fast answers. However, as data continues to grow and become even more important, companies are looking for more ways to extract valuable insights from the data, such as big data analytics, numerous machine learning (ML) applications, and a range of tools to drive new use cases and business processes. Many companies today are using Amazon Redshift to analyze data and perform various transformations on the data. Amazon Redshift is a fast, fully managed, cloud-native data warehouse that makes it simple and cost-effective to analyze all your data using standard SQL and your existing business intelligence tools.
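The unload step can be sketched as a small Python helper that builds Redshift's `UNLOAD ... FORMAT AS PARQUET` statement. This is a minimal sketch, assuming you submit the resulting SQL through a client you already use (for example, the Redshift query editor); the function names, bucket, and role ARN are hypothetical placeholders. Because `UNLOAD` takes the query as a quoted string literal, the helper doubles any single quotes inside it, and it adds an optional `PARTITION BY` clause.

```python
from typing import Optional, Sequence


def quote_sql_literal(text: str) -> str:
    """Wrap text in single quotes, doubling embedded single quotes."""
    return "'" + text.replace("'", "''") + "'"


def build_unload_parquet_sql(
    select_query: str,
    s3_prefix: str,
    iam_role_arn: str,
    partition_by: Optional[Sequence[str]] = None,
) -> str:
    """Build an UNLOAD statement that exports a query result to S3 as Parquet.

    Hypothetical helper: it only builds the SQL string and does not run it.
    """
    if not s3_prefix.startswith("s3://"):
        raise ValueError("s3_prefix must be an s3:// URI")
    parts = [
        # The query itself is passed to UNLOAD as a string literal.
        f"UNLOAD ({quote_sql_literal(select_query)})",
        f"TO {quote_sql_literal(s3_prefix)}",
        # Role Redshift assumes to write into the bucket.
        f"IAM_ROLE {quote_sql_literal(iam_role_arn)}",
        "FORMAT AS PARQUET",
    ]
    if partition_by:
        # Optional Hive-style partition folders, e.g. o_orderstatus=F/.
        parts.append(f"PARTITION BY ({', '.join(partition_by)})")
    return " ".join(parts)
```

For a hot/cold split, the query passed in would typically select only the older ("cold") rows, such as orders before an assumed date cutoff, while recent rows stay on the cluster.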

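The crawler step from the solution steps (creating an AWS Glue Data Catalog with an AWS Glue crawler) might look like the following sketch. It assumes boto3 is available and that you pass in a Glue client such as `boto3.client("glue")`; injecting the client keeps the sketch testable without AWS credentials. The crawler name, role, database, and S3 path are hypothetical, and the role must be one that AWS Glue can assume with read access to the unloaded Parquet files.

```python
from typing import Any, Dict, List


def crawler_definition(
    name: str, role_arn: str, database: str, s3_paths: List[str]
) -> Dict[str, Any]:
    """Keyword arguments for the Glue client's create_crawler call."""
    if not s3_paths:
        raise ValueError("at least one S3 path is required")
    return {
        "Name": name,
        # IAM role the crawler assumes; it needs read access to the S3 paths.
        "Role": role_arn,
        # Glue Data Catalog database that receives the discovered tables.
        "DatabaseName": database,
        "Targets": {"S3Targets": [{"Path": path} for path in s3_paths]},
    }


def create_and_start_crawler(glue_client: Any, definition: Dict[str, Any]) -> None:
    """Register the crawler, then start one run to catalog the unloaded files.

    glue_client is expected to behave like boto3.client("glue").
    """
    glue_client.create_crawler(**definition)
    # start_crawler returns immediately; the crawl itself runs asynchronously.
    glue_client.start_crawler(Name=definition["Name"])
```

Once the crawl finishes, the tables it creates in the Glue database are visible to both Athena and Redshift Spectrum.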

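The hot/cold pattern and the query step (querying Amazon Redshift and the data lake with Amazon Redshift Spectrum) can be sketched as two SQL statements: one that maps the Glue database into Redshift as an external schema, and one view that unions local ("hot") rows with S3 ("cold") rows. This is a minimal sketch under assumed names (schema, database, view, and table names are hypothetical). Redshift requires views over external tables to be late-binding (`WITH NO SCHEMA BINDING`), and late-binding views need schema-qualified table names, so the helper checks for a schema prefix.

```python
from typing import Sequence


def create_external_schema_sql(schema: str, glue_database: str, iam_role_arn: str) -> str:
    """Expose a Glue Data Catalog database in Redshift as a Spectrum schema.

    Assumes simple identifiers that need no extra quoting or escaping.
    """
    return (
        f"CREATE EXTERNAL SCHEMA IF NOT EXISTS {schema} "
        f"FROM DATA CATALOG DATABASE '{glue_database}' "
        f"IAM_ROLE '{iam_role_arn}'"
    )


def hot_cold_view_sql(
    view: str, hot_table: str, cold_table: str, columns: Sequence[str]
) -> str:
    """Union recent local rows with archived S3 rows behind a single view."""
    for table in (hot_table, cold_table):
        # Late-binding views require schema-qualified names such as public.orders.
        if "." not in table:
            raise ValueError(f"table must be schema-qualified: {table}")
    cols = ", ".join(columns)
    return (
        f"CREATE OR REPLACE VIEW {view} AS "
        f"SELECT {cols} FROM {hot_table} "
        f"UNION ALL "
        f"SELECT {cols} FROM {cold_table} "
        # Required because cold_table is an external (Spectrum) table.
        f"WITH NO SCHEMA BINDING"
    )
```

Analysts can then query the view as one table, while the cold rows no longer consume local cluster storage.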